The broken promise of voice assistants

While voice assistants were marketed as the next big thing, most haven’t lived up to their simple promise: “You can ask me to do things, and I will.” The need to speak in rigid phrases rather than naturally, to say things within a specific time window, no tolerance for accents, background noise or follow up comments and poor error handling have rendered the experience… less than enjoyable to put it mildly.

We’ve all been there- modulating our voice, slowing down our speech, choosing simpler words- like we’re talking to a 95-year-old grandma, just to get the machine to “understand.” The experience? Infuriating at worst, meh at best.

And when a product consistently fails to meet its users’ expectations, trust erodes. The goodwill and readiness to give the benefit of the doubt soon gives way to reservations and reluctance to use the system at all. People stop trying. They look for workarounds or abandon it altogether. What’s fascinating is that this isn’t tied to one particular product or brand. The voice assistants’ failures are universal- and so is the loss of trust.

As a designer working in automotive UX, I’ve been exploring where voice succeeds- and where it’s still stalling in this particular context. When we talk to drivers, the feedback is consistent and worrying: “It never works, so I don’t even try anymore.” The question I ask in this article is: how can we rebuilt this lost trust and and why is it worth doing in the first place?

Why voice isn’t always the answer

Let’s set one thing straight: voice isn’t the silver bullet and it will never universally replace graphic interfaces.

The factors affecting suitability of the voice interface are many: social context, comparison needs, privacy and accessibility to mention a few. While certain scenarios render voice interfaces inappropriate (would you rather see the available routes on your sat nav, or have them explained to you verbally?), others make it the perfect choice.

“Hey Siri, fast forward 20 seconds” is a command I’ve uttered hundreds of times- in an effort to skip the annoying intros and ads, whilst chopping spuds for a roast and listening to my favourite true crime podcasts.

Voice thrives in environments where our hands and eyes are busy, tasks are short and habitual, where we can enjoy relative privacy and where safety or accessibility make screen use impractical.

This makes cars - and driving them- the perfect setting for voice. As much as doing a search on your iPad with fingers covered in olive oil is less than practical, fiddling with the infotainment when your eyes should be on the road might have much graver consequences.

Why voice failure matters most in cars

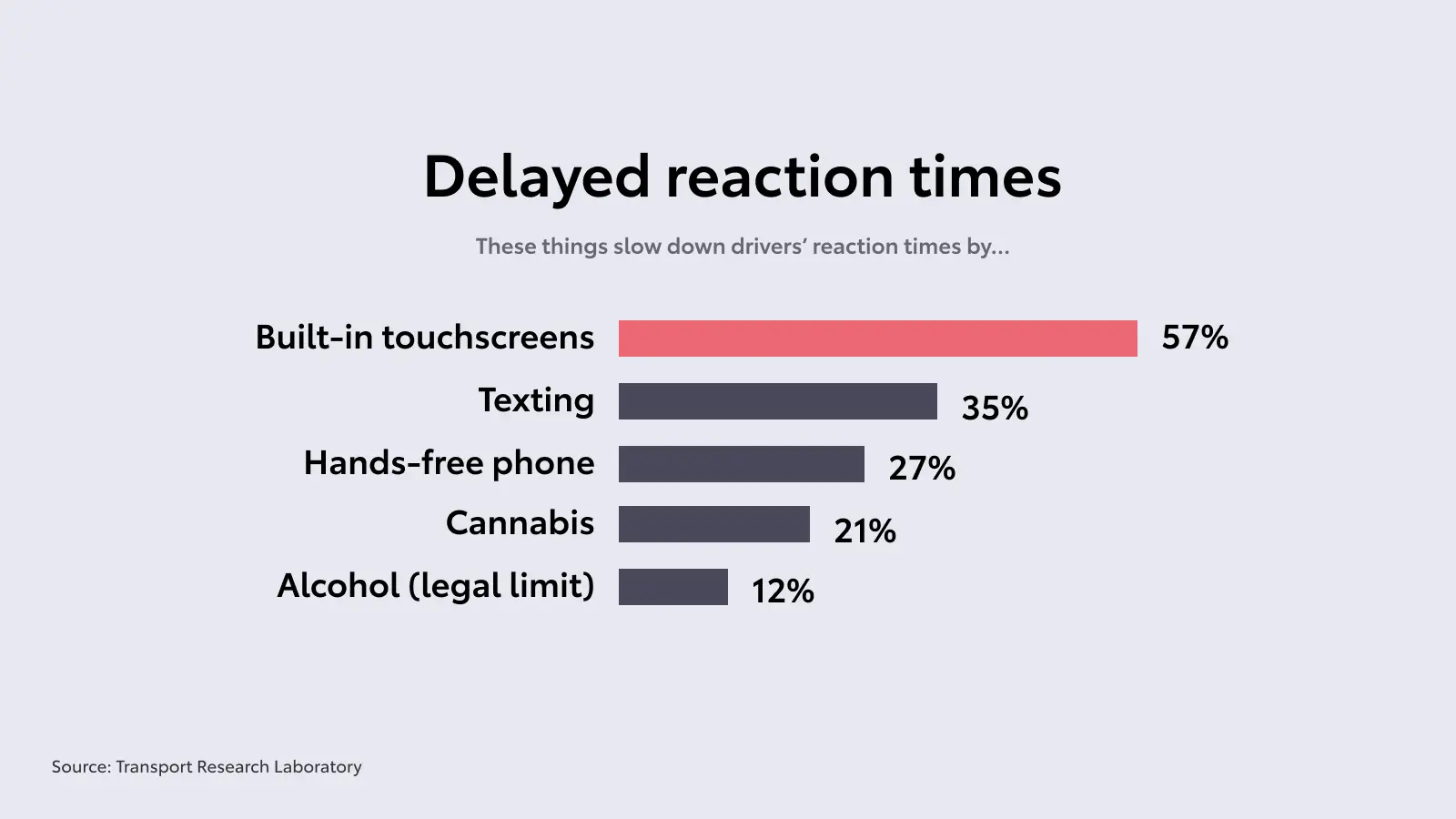

Whilst in many contexts the choice between visual or audio input is a matter of pure convenience, the stakes, when driving, are much higher: safety of the driver, passengers and others around them. According to Transport Research Laboratory, "drivers who use built-in touchscreen technology whilst driving are more distracted than if they were driving under the influence of alcohol, drugs or using a handheld phone to call or text.” with reaction times slowing down by shocking 57% (compared to 12% when drunk driving for example).

Over a third of fatal crashes in 2023 was caused by distracted driving (which includes in-vehicle distractions such as screens and devices). Voice interfaces hold the power to minimise distractions and reduce cognitive load, and with all that in mind, the conclusion is clear: voice should be the main way we interact with cars. So why isn’t it? It’s certainly not because of exclusivity or lack of access to the technology itself: in-vehicle voice assistants have been around for a quarter of a century.

For some, habit still plays a role: many drivers are used to manual controls or screens, so they don’t instinctively reach for voice. But most modern users understand the value of voice intuitively and still choose not to use it- the reason being past failures and poor experiences.

The mismatch between the promise of an intelligent assistant in such a safety-critical context and the disappointing reality causes people to disengage and… revert to screens. It’s ironic that the place where voice can bring the most value- save lives- is also where people trust it the least.

Things are changing

With the advent of AI, the VA technology has undergone an enormous shift. The pain points which have made users lose interest and turn away from voice in the past, are gradually being done away with. The days of rigid commands and phrasing will become a thing of the past. Behold, we’ll be free to say “I’m cold” instead “Hey car, set temperature to 23 degrees”. We’ll be free to mumble, pause, “hmmm” and “ummm” to our hearts content and the system will still most likely know what we mean. If it won’t, it will ask us to clarify, but won’t lose the context of what was said previously. Like when we’d say “actually, the one with the cafe” after already choosing which charging station we want to go to.

But there’s still work to do, because while the old-world voice assistants placed the burden of adaptation on users and it’s true that AI removes much of that burden, it doesn’t mean that trust will magically return.

Winning back trust

In UX, trust lives in what’s often described as a reservoir of goodwill- a finite pool of patience, curiosity, and forgiveness that users extend to a system. On first use, the pool is usually full but it drains with every failed or unsatisfying interaction, and in case of voice assistants… well, it’s been draining for a mighty long time.

This is why giving a feature or system “another try” is harder than first adoption. Re-entry starts in emotional debt. As humans, we remember failures far more readily than successes- it’s just how we’re wired. Past failures lower expectations before the system has even had a chance to perform. They make us approach it (if we even care to approach it) defensively, with less patience, and an assumption that it will disappoint. Once the conclusion that “it never works” forms, isolated improvements are not enough to change behaviour. Re-entry requires undoing a learned conclusion, not just demonstrating technical progress.

So how do we convince users to give voice- the new, conversational, context-aware voice that can make driving safer for everyone- another go? How do we clearly differentiate this generation of voice from what came before? The answer isn’t louder claims about intelligence, or treating voice as a novelty feature.

Voice isn’t “mood lighting” or a cutsey avatar on the screen. It’s more like a seatbelt for our hands and eyes- a quiet safety layer that allows us to do what we need to do while keeping our attention on the road.

Knowing the true value of the feature, rebuilding trust will require deliberate, careful design: intentional onboarding, low-risk moments to try voice early, and space for users to recalibrate their expectations through experience rather than promises.

As designers, our role is to create those moments- let people Genchi Genbutsu- see for themselves- that this is different. Winning back trust won’t be easy, but in a context where attention and safety are inseparable, it’s work worth doing.

Written by